Running A/B tests is one of the most effective ways to optimise your referral campaigns. With the updated test creation flow, you can launch smart experiments in minutes using proven templates or create custom tests tailored to your goals.

Step-by-step guide

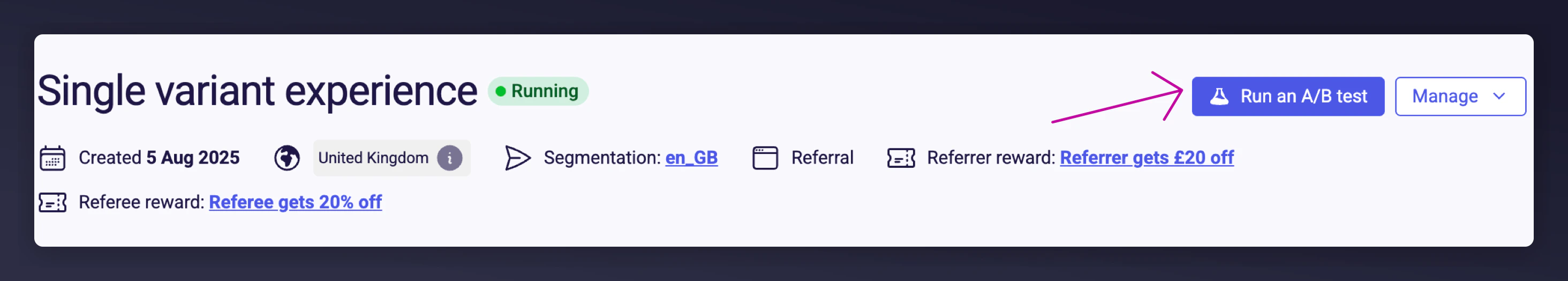

Navigate to the right campaign

Open Mention Me and navigate to the campaign where you’d like to run an A/B test. Click the Run an A/B Test button at the top of the campaign dashboard.

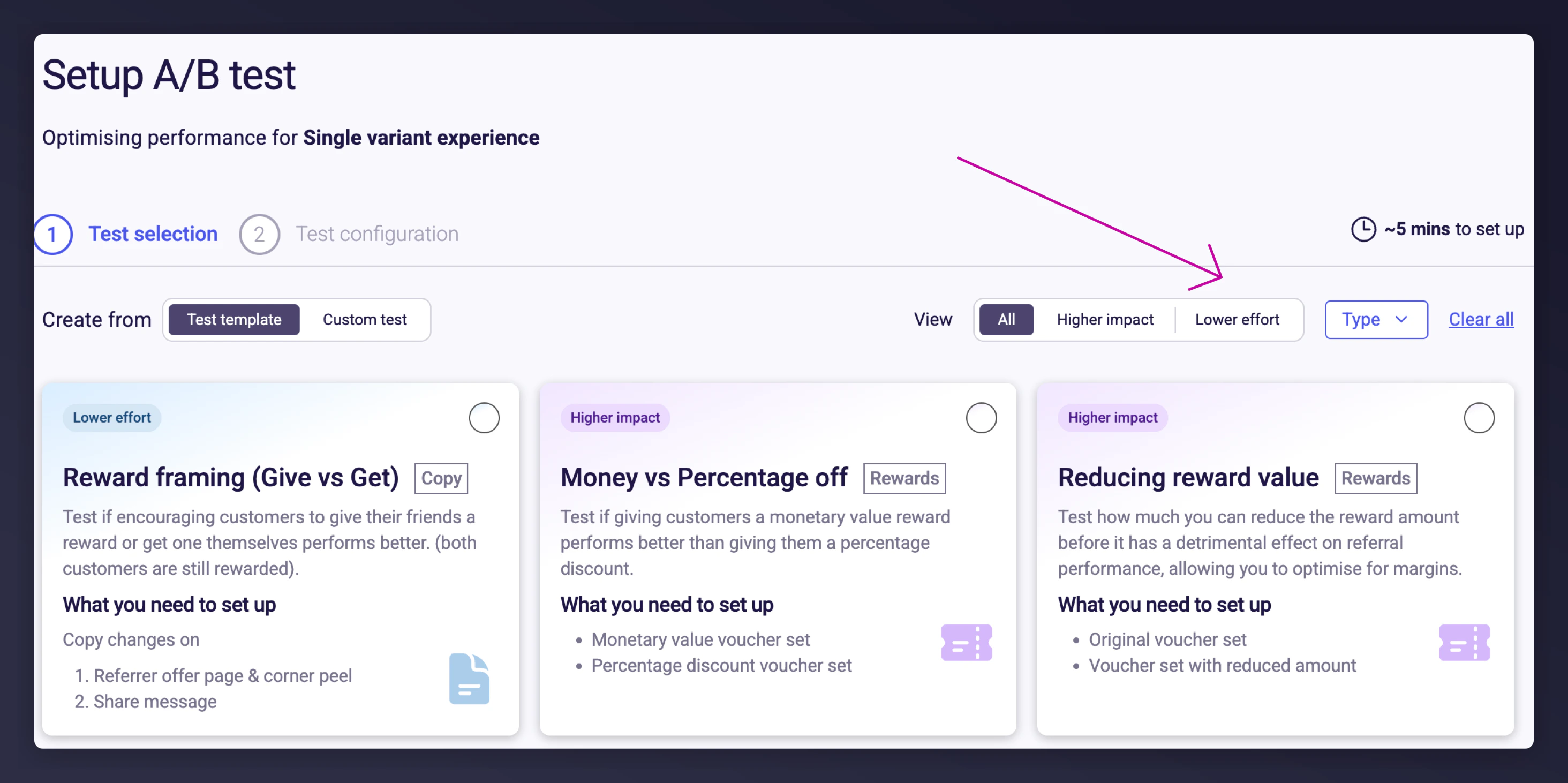

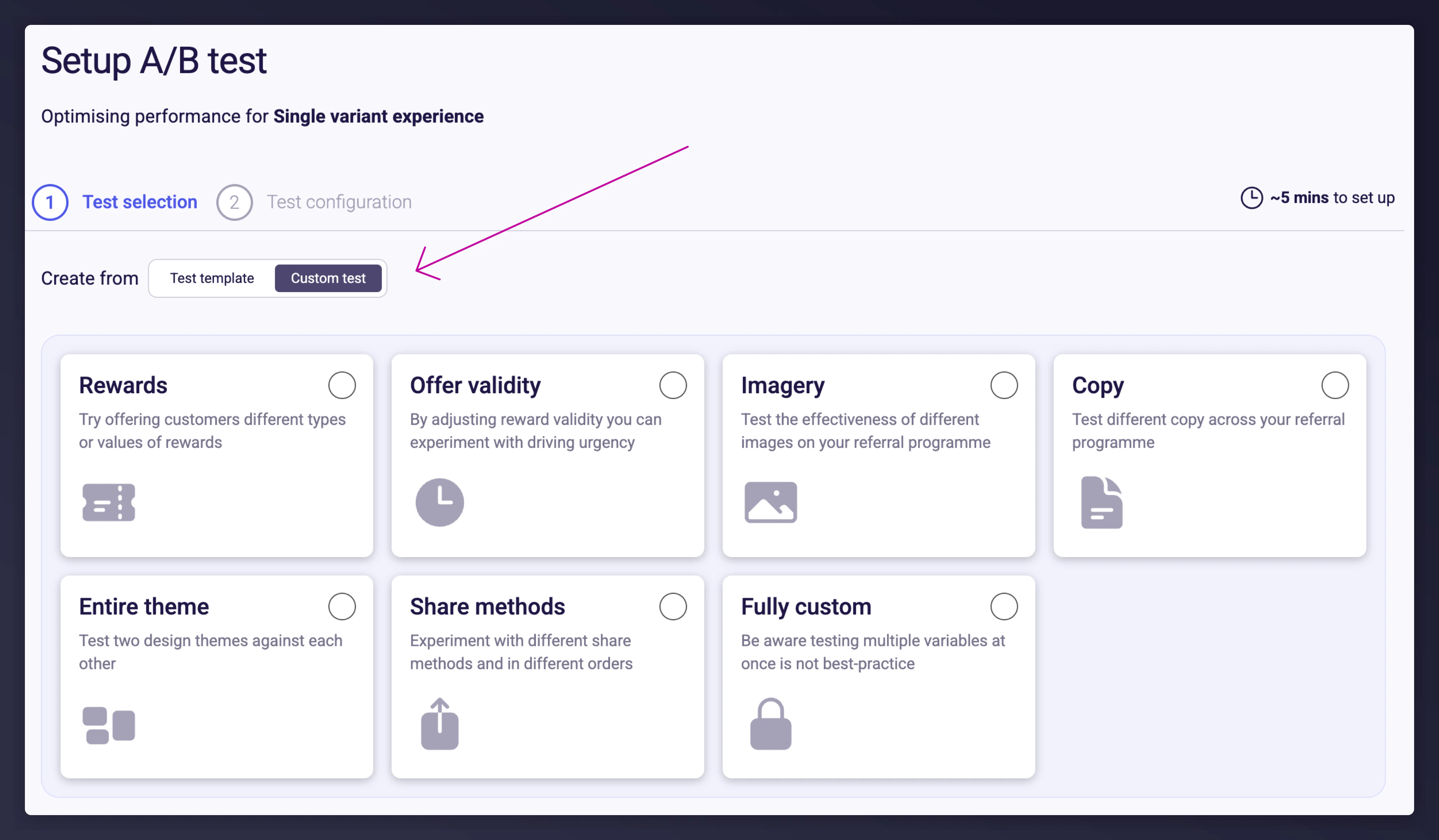

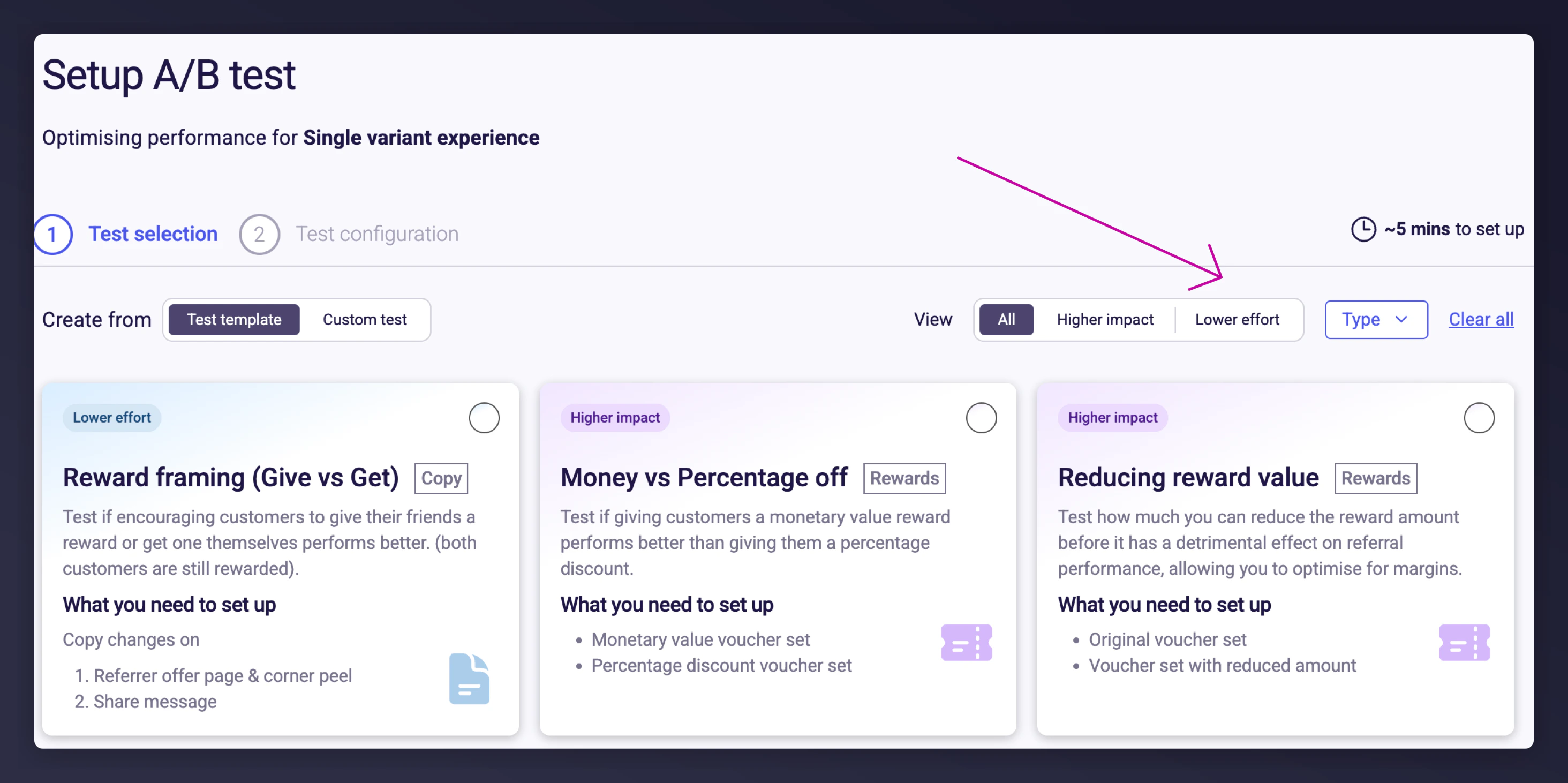

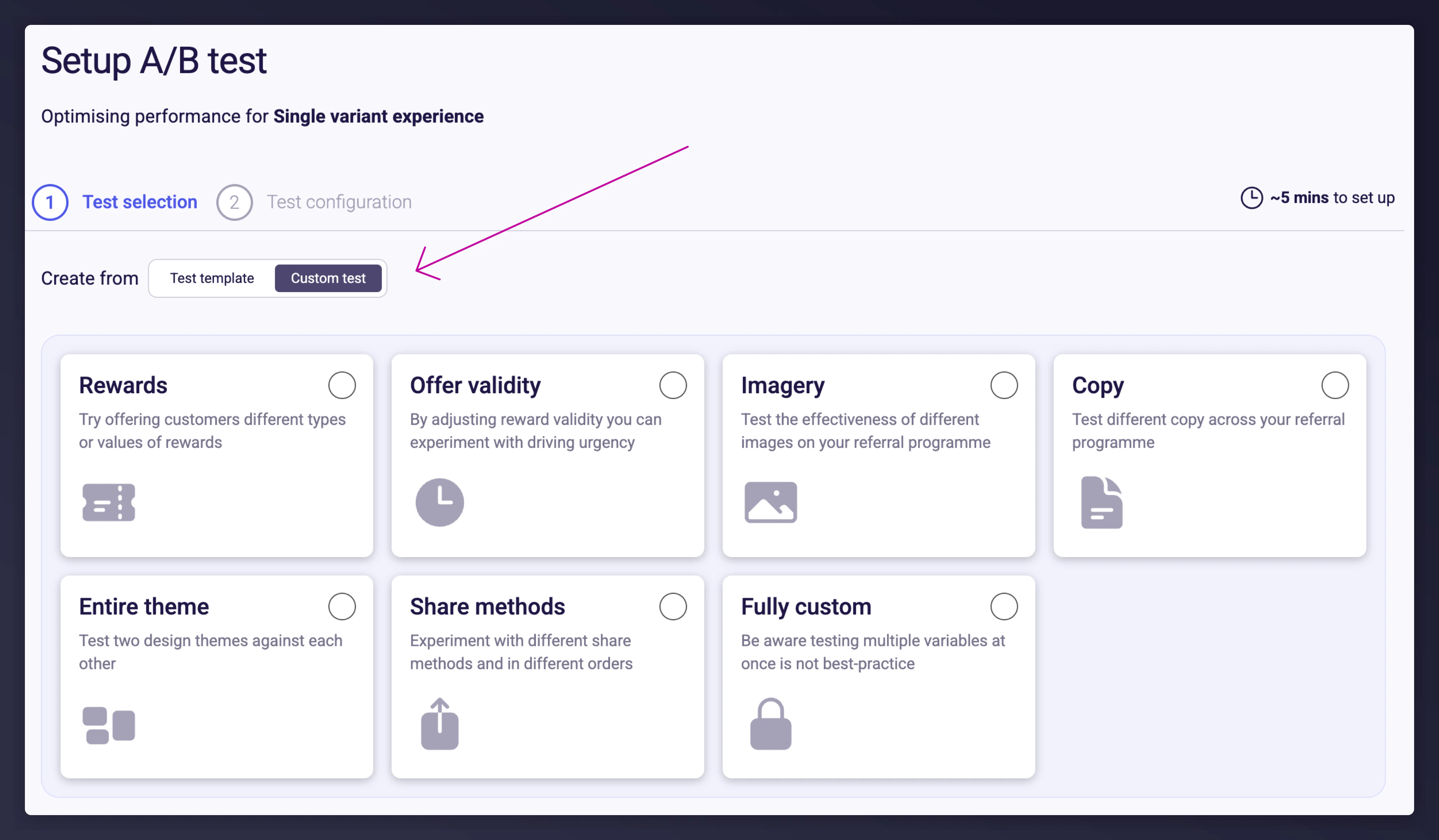

Choose a test template or create a custom one

Select one of the 12 pre-built test templates, which are based on high-performing examples from other brands. Templates are labelled:

- High Impact: Proven to deliver strong uplift

- Low Effort: Quick and easy to implement

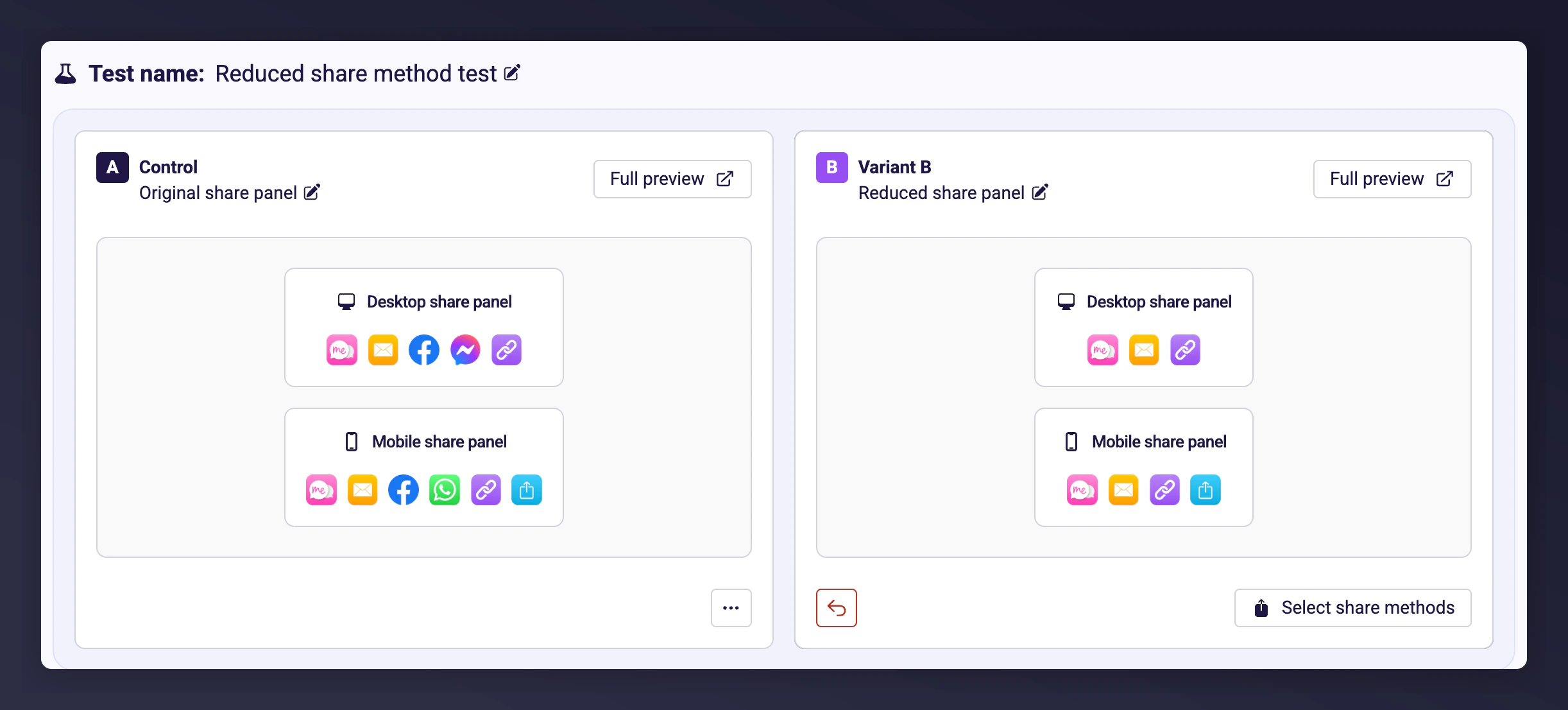

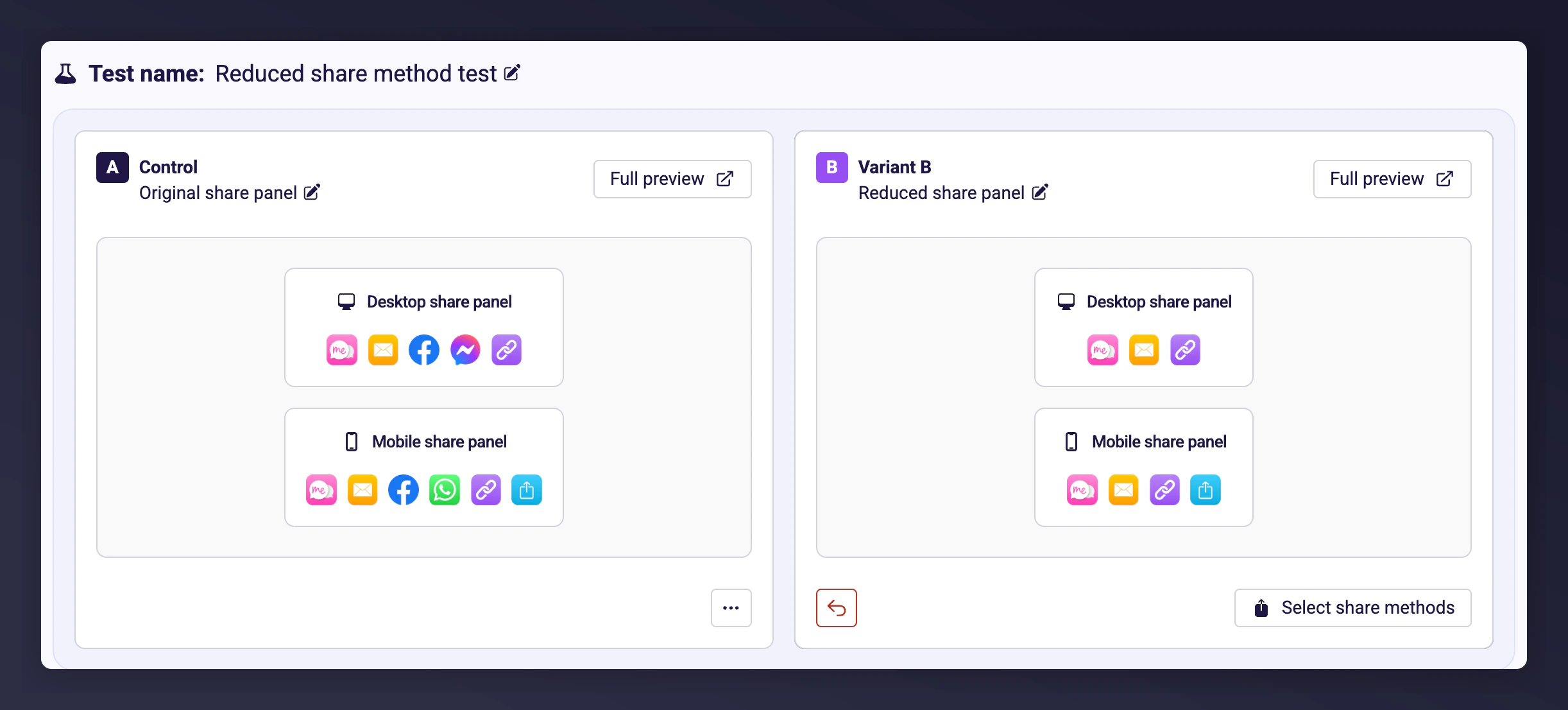

Configure the variant experience

The variant may be pre-filled with best-performing defaults (e.g. a specific set of share methods already selected). Use the Select share methods button to customise the variant experience further.

Check the test parameters

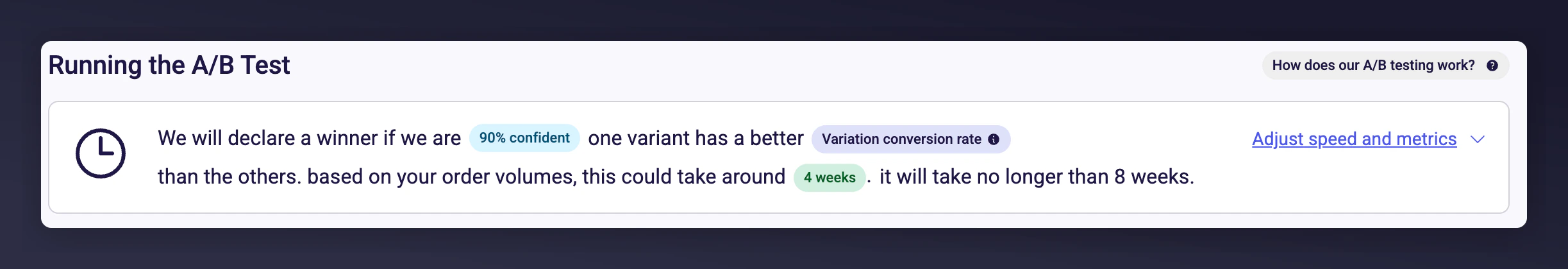

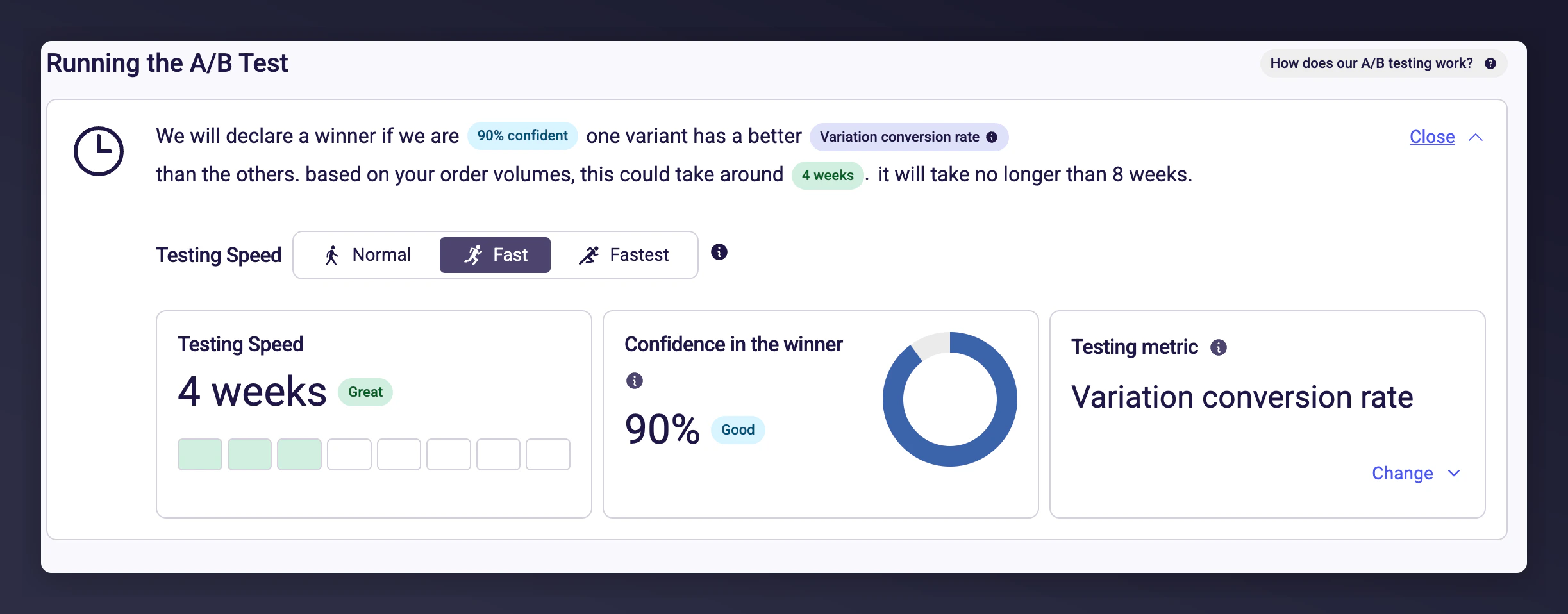

Configure how your A/B test will run. Smart defaults are applied to save time:

- Confidence Level: Automatically selected based on your campaign traffic (e.g. 90%)

- Success Metric: Defaults to variation conversion rate

- Estimated Duration: Example shows 4 weeks, depending on traffic and goals

You can also adjust these defaults:

- Change the Confidence Level (trading speed against reliability)

- Select a custom testing metric
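To see why the Confidence Level is a trade-off between speed and reliability, the following sketch estimates how long a test might need at different confidence levels. It is a generic textbook calculation (sample size for a two-sided two-proportion z-test), not a description of how Mention Me computes its Estimated Duration. The function names, the 80% statistical power, and the example traffic figures are all assumptions made for illustration.

```python
import math
from statistics import NormalDist


def visitors_per_variation(baseline_rate, relative_uplift, confidence, power=0.8):
    """Approximate visitors needed per variation (two-sided two-proportion z-test).

    Illustrative only: the 80% power default is an assumption, not a platform setting.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_uplift)
    # Critical values for the chosen confidence level and power.
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def estimated_weeks(weekly_visitors, baseline_rate, relative_uplift, confidence):
    """Weeks needed when traffic is split evenly between control and variant."""
    per_variation = visitors_per_variation(baseline_rate, relative_uplift, confidence)
    return math.ceil(2 * per_variation / weekly_visitors)


# Hypothetical campaign: 5,000 visitors a week, 10% baseline conversion rate,
# aiming to detect a 20% relative uplift in the variant.
for confidence in (0.80, 0.90, 0.95):
    weeks = estimated_weeks(5000, 0.10, 0.20, confidence)
    print(f"{confidence:.0%} confidence: about {weeks} week(s)")
```

Under these assumptions, a higher confidence level always requires at least as many visitors, so the test takes longer on the same traffic. A lower level finishes sooner but is more likely to declare a winner by chance.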

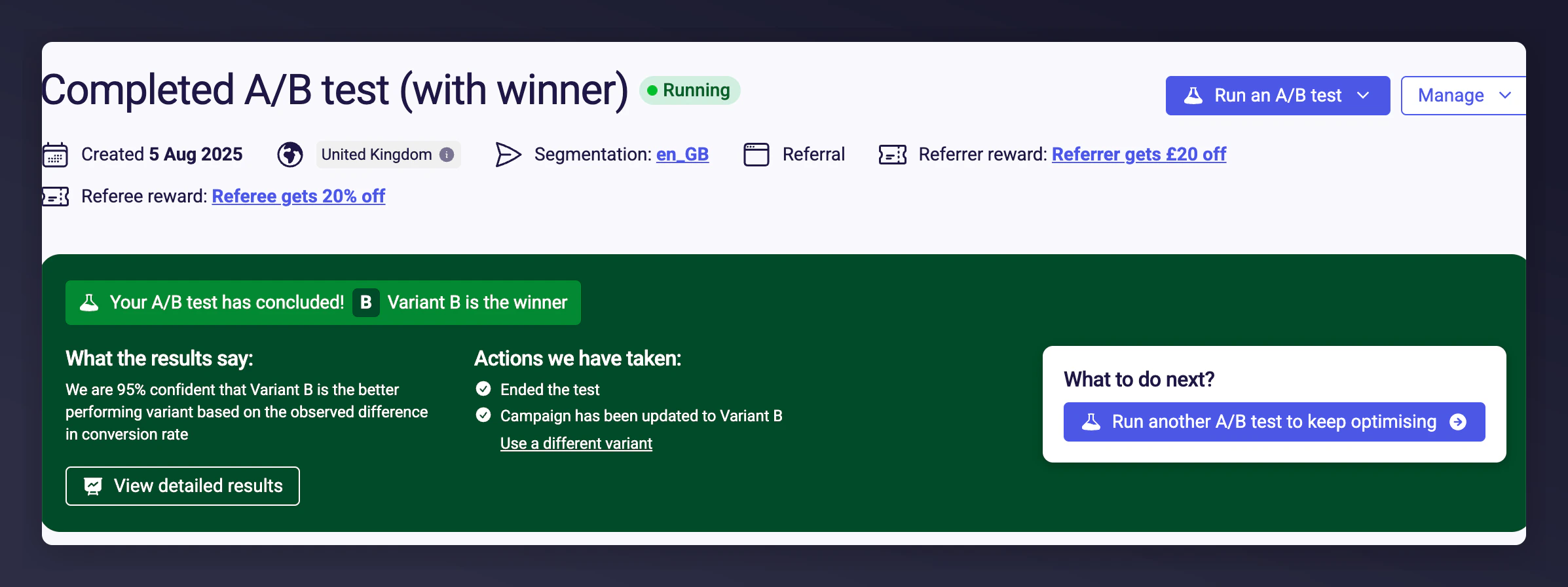

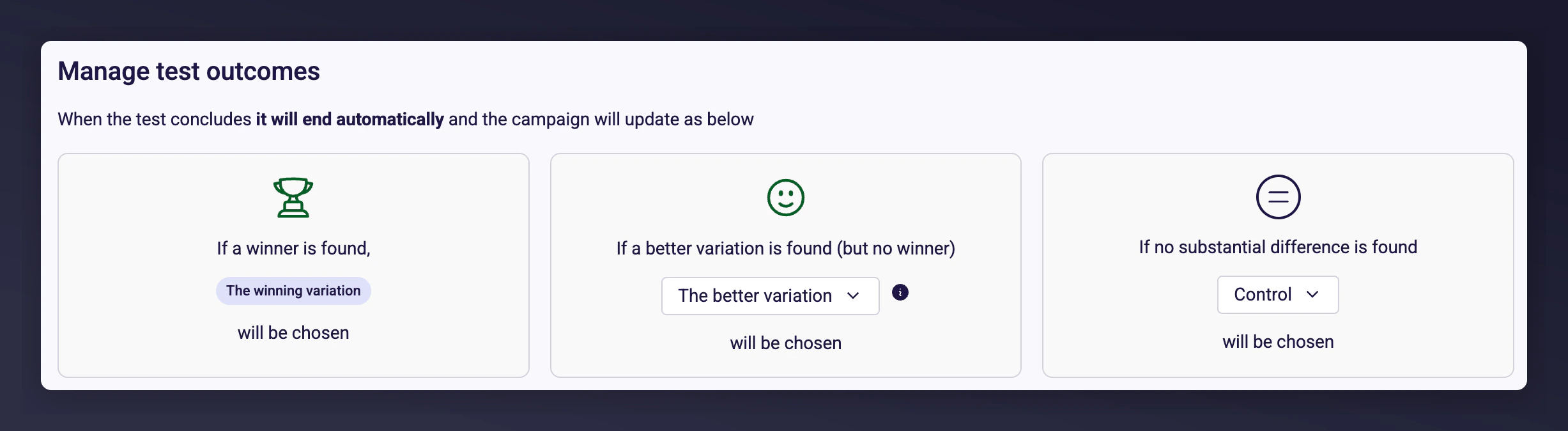

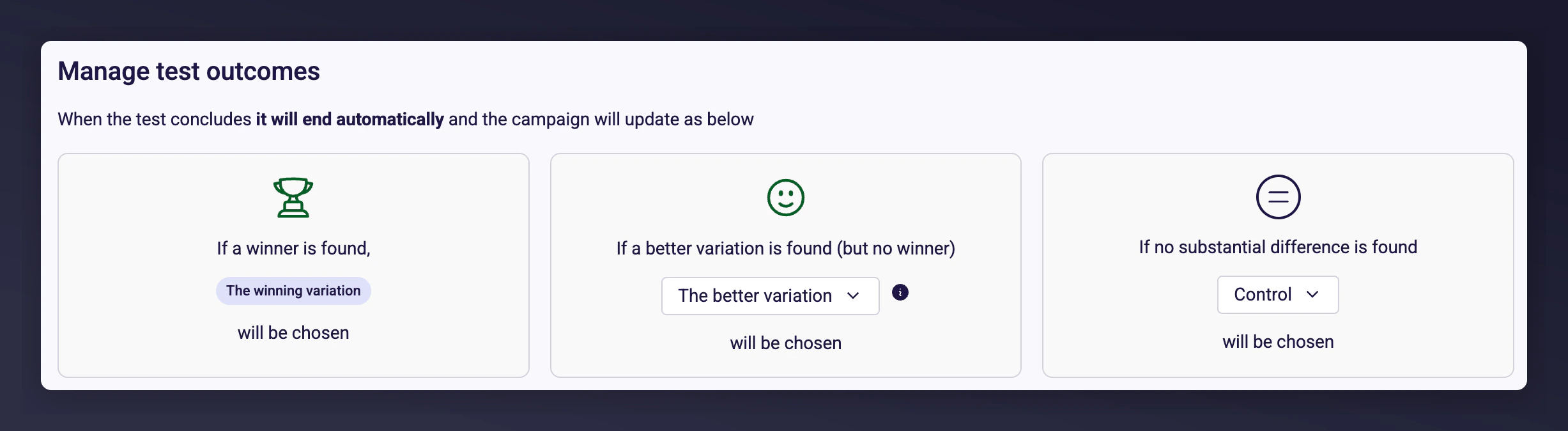

Check test outcomes

Control what happens when the test ends. By default:

- The test will auto-conclude after:

  - 8 weeks

  - Finding a statistically significant winner

  - No meaningful difference over the campaign duration

- All traffic will be directed to the best-performing variant
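The default outcomes above can be read as a small set of decision rules. The sketch below is one possible interpretation, not Mention Me's actual implementation: it assumes significance is checked with a pooled two-proportion z-test, and it treats "no meaningful difference" as the planned duration elapsing without a significant result. The names `is_significant`, `evaluate_experiment`, and `planned_weeks` are hypothetical.

```python
import math
from statistics import NormalDist

MAX_WEEKS = 8  # default cap described in the list above


def is_significant(control_conv, control_n, variant_conv, variant_n, confidence):
    """Two-sided two-proportion z-test using a pooled standard error."""
    pooled = (control_conv + variant_conv) / (control_n + variant_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / variant_n))
    if se == 0:
        return False  # no variation in the data, nothing to compare
    z = (variant_conv / variant_n - control_conv / control_n) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_value < 1 - confidence


def evaluate_experiment(weeks_elapsed, planned_weeks, control, variant, confidence=0.90):
    """Return (status, winner). control and variant are (conversions, visitors) pairs."""
    c_conv, c_n = control
    v_conv, v_n = variant
    # The variation with the higher observed conversion rate receives all traffic.
    leader = "variant" if v_conv / v_n > c_conv / c_n else "control"
    if is_significant(c_conv, c_n, v_conv, v_n, confidence):
        return "concluded: significant winner", leader
    if weeks_elapsed >= MAX_WEEKS:
        return "concluded: reached 8 weeks", leader
    if weeks_elapsed >= planned_weeks:  # assumed reading of "no meaningful difference"
        return "concluded: no meaningful difference", leader
    return "running", None


# Hypothetical data: (conversions, visitors) for each variation.
print(evaluate_experiment(2, 4, control=(400, 4000), variant=(480, 4000)))
print(evaluate_experiment(2, 4, control=(400, 4000), variant=(410, 4000)))
```

In this sketch the significance check runs first, so a clear winner ends the test early, while the 8-week cap guarantees the test never runs indefinitely.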

Launch your test

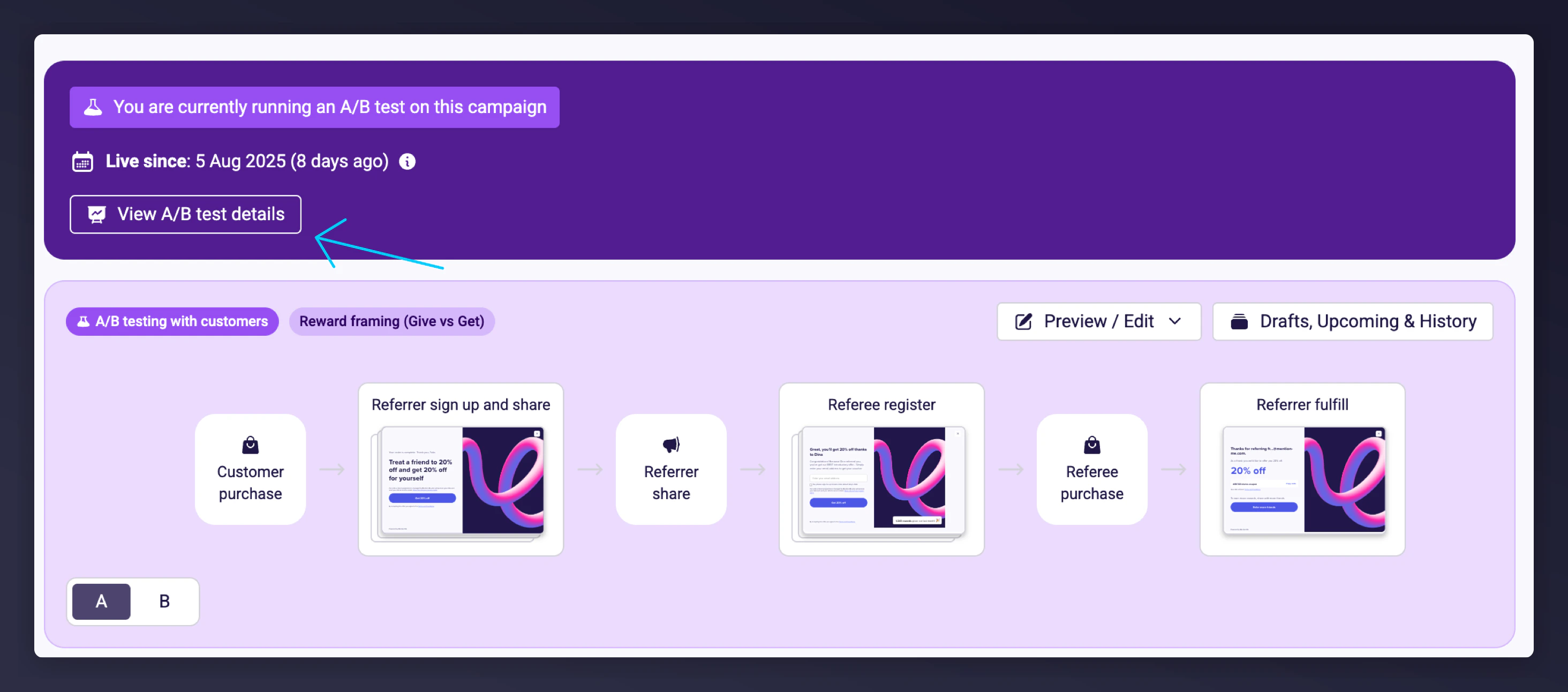

Click Run Test when you’re ready. Preview the final experience to see what each variation looks like post-purchase.

After launch:

- The campaign page will show your live test

- Click View A/B Test Details to monitor real-time performance